Introduction

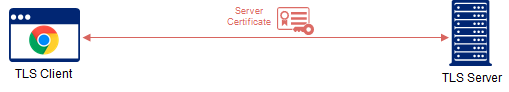

The SSL/TLS protocol was designed to secure the communication (confidentiality and authenticity) between only two parties.

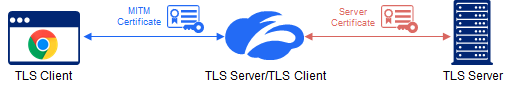

However, bad actors continuously abuse the same standards that protect user privacy (and that were instrumental in scaling the internet to where it is today). This has created a de facto need to add a third party to the mix: a trusted inline SSL inspection service.

SSL inspection is also known as ‘Trusted’ MITM (man-in-the-middle) and SSL proxy.

While the introduction of a trusted 3rd party is vital for security, it also inherently introduces risk.

With great power comes great responsibility

To earn the trust of our customers, we have to not only assume the responsibility of acknowledging and enumerating the risk factors, but also ensure that we deliver a superior product that mitigates the risks.

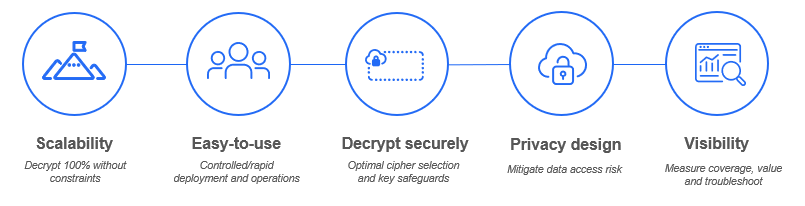

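This blog post describes the following key pillars of a superior SSL inspection solution that maximizes your security posture while minimizing risk and ensuring a great user experience:

- The right architecture for scale

- Easy-to-use

- Secure decryption

- Design for privacy

- Visibility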

But, first, it is important to understand the mechanics of the SSL inspection process.

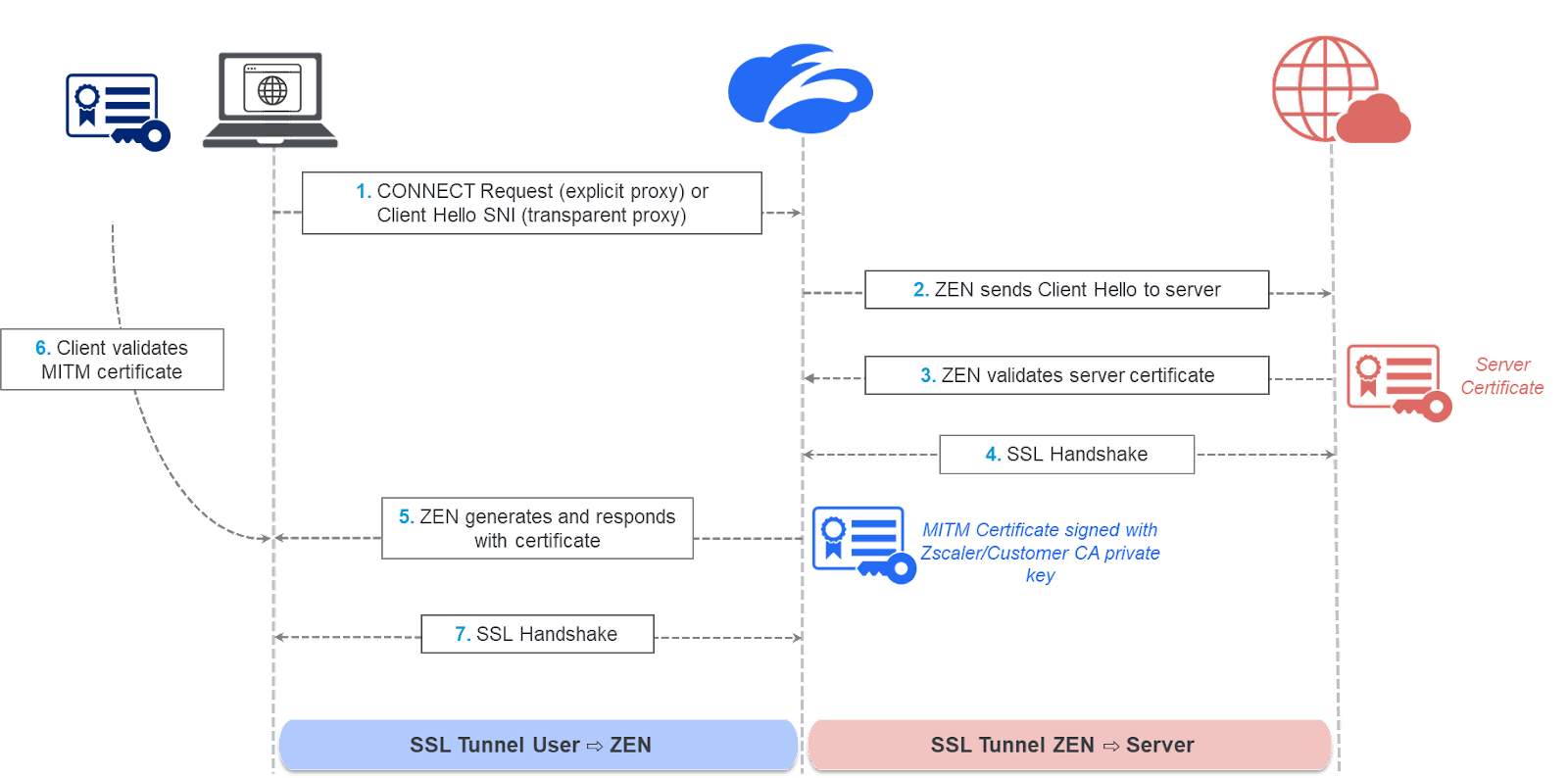

SSL inspection workflow

The ZIA Service Edge proxies the TLS connection by acting as a TLS server toward the TLS client (the end-user application) and as a TLS client toward the destination TLS server. The service completes a server-side SSL handshake with the destination server, agreeing on a symmetric session key used to encrypt and decrypt traffic on the server side and validating the server’s certificate, much as your browser would. The service then generates a domain certificate that mirrors the original certificate but is signed with the private key of the Zscaler intermediate CA or a customer intermediate CA. Finally, the service sends this certificate to the client for validation and completes a client-side handshake, agreeing on a different symmetric session key used to encrypt and decrypt traffic on the client side.
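To make the sequence above concrete, here is a minimal Python sketch of the workflow. It is a toy model, not Zscaler's implementation: no real cryptography or networking takes place, and every name in it (`Certificate`, `TlsSession`, `inspect_connection`, the CA labels) is hypothetical. It only shows the three steps: validate the server, mint a look-alike certificate under the inspection CA, and keep two independent session keys.

```python
from __future__ import annotations

import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class Certificate:
    subject: str  # domain the certificate was issued for
    issuer: str   # CA that signed it


@dataclass(frozen=True)
class TlsSession:
    peer: str
    session_key: bytes  # stand-in for the symmetric key agreed in a handshake


def validate_server_cert(cert: Certificate, hostname: str, trusted: set[str]) -> None:
    """Stand-in for real chain and hostname validation."""
    if cert.subject != hostname:
        raise ValueError(f"hostname mismatch: {cert.subject!r} != {hostname!r}")
    if cert.issuer not in trusted:
        raise ValueError(f"untrusted issuer: {cert.issuer!r}")


def inspect_connection(hostname: str, server_cert: Certificate,
                       trusted: set[str], inspection_ca: str):
    # 1. Server-side handshake: the proxy acts as a TLS client and
    #    validates the destination server's certificate.
    validate_server_cert(server_cert, hostname, trusted)
    server_side = TlsSession(peer=hostname, session_key=secrets.token_bytes(32))

    # 2. Mint a domain certificate with the same subject, signed by the
    #    inspection intermediate CA instead of the public CA.
    minted = Certificate(subject=server_cert.subject, issuer=inspection_ca)

    # 3. Client-side handshake: the proxy acts as a TLS server and agrees
    #    on a different session key with the client.
    client_side = TlsSession(peer="client", session_key=secrets.token_bytes(32))
    return minted, client_side, server_side
```

The client only accepts the minted certificate because the inspection CA has been installed in its trust store, which is why protecting that CA's private key matters so much.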

Pillar 1: The right architecture for scale

Your SSL inspection solution has to scale to decrypt 100% of traffic at line rate. No compromises here. Line rate is critical to ensuring a great user experience, and 100% coverage is key to avoiding blind spots.

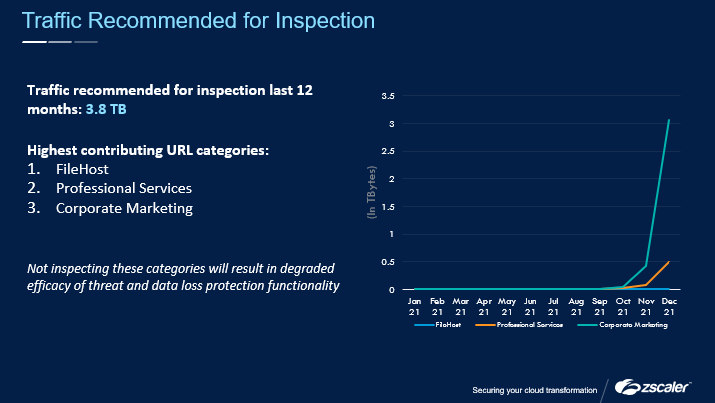

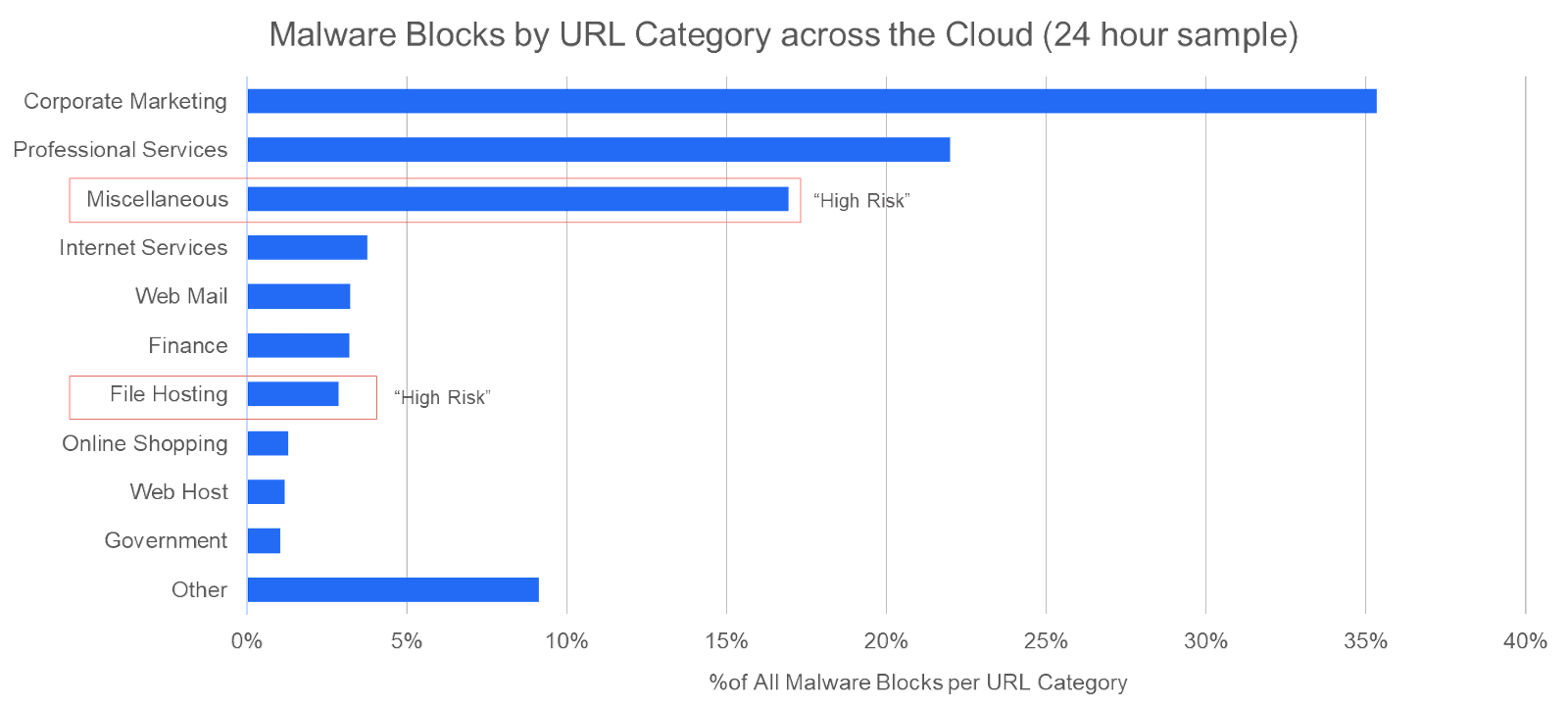

This is where capacity-constrained legacy NGFW appliance vendors suffer the most and compromise either on coverage or on user experience. To illustrate their struggle, it’s not uncommon to see legacy NGFW vendors publish deployment best practices for decrypting only “high risk” URL categories, while trusting the others.

This is not only ironic (how is that for zero trust?) but also risky, literally. This advice leaves customers in a dystopian scenario where risk is unchecked, unmanaged, and unmitigated. Zscaler observes malware payloads across all URL categories, even those that NGFW vendors consider low or medium risk.

The only way to achieve true scale is through a multi-tenant purpose-built SSE solution with custom-built SSL-accelerated hardware.

Pillar 2: Easy-to-use

In an ideal world, you would just flip an SSL switch and everything would automagically work. In reality, a successful rollout requires managing the risks that arise from privacy regulations, works councils, and incompatible applications and devices.

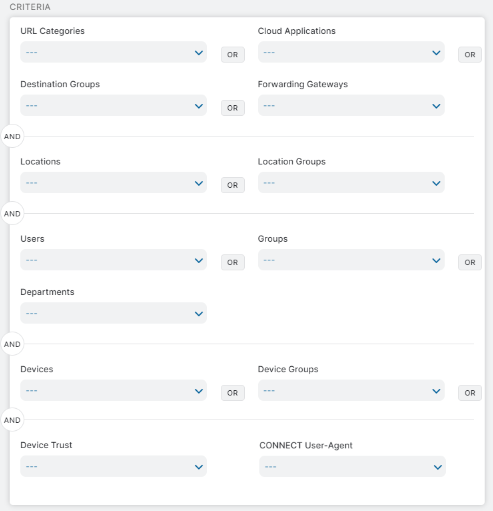

The single most important capability here is robust rule-based SSL inspection that lets you choose which traffic gets inspected and which gets exempted, based on a multitude of factors pertaining to the source and destination.

Zscaler’s rule-based engine, supporting over a dozen criteria, lets your creative juices flow. Just to name a few common examples:

- Managing SSL inspection levels based on varying data privacy regulations

- Managing SSL inspection levels based on the device and OS type

- Managing SSL inspection for applications based on user agent context (native application vis-a-vis browser)

- Excluding IoT device traffic from SSL inspection

- Selectively decrypting certain O365 applications

Thanks to the Zscaler Client Connector, all traffic originating from a managed device (decrypted or undecrypted) can be attributed not only to a specific user/group, but also to a specific device and OS type. This ensures consistent user-based policy enforcement and an ability to distinguish between managed and unmanaged devices for smart policies, such as exempting IoT traffic.
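The rule-based approach described in this section can be sketched in a few lines of Python. This is an illustration only: the criteria names, the first-match-wins ordering, and the default action are assumptions made for the example, not the actual Zscaler policy model.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Transaction:
    url_category: str
    device_type: str   # e.g. "managed", "unmanaged", "iot" (hypothetical values)
    os_type: str
    user_country: str


@dataclass(frozen=True)
class Rule:
    name: str
    action: str  # "inspect" or "do_not_inspect"
    # None means "any value"; otherwise the transaction value must be in the set.
    url_categories: Optional[frozenset[str]] = None
    device_types: Optional[frozenset[str]] = None
    os_types: Optional[frozenset[str]] = None
    countries: Optional[frozenset[str]] = None

    def matches(self, tx: Transaction) -> bool:
        checks = [
            (self.url_categories, tx.url_category),
            (self.device_types, tx.device_type),
            (self.os_types, tx.os_type),
            (self.countries, tx.user_country),
        ]
        return all(allowed is None or value in allowed for allowed, value in checks)


def evaluate(rules: list[Rule], tx: Transaction, default: str = "inspect") -> str:
    """Return the action of the first matching rule (assumed first-match-wins)."""
    for rule in rules:
        if rule.matches(tx):
            return rule.action
    return default


RULES = [
    Rule("exempt-iot", "do_not_inspect", device_types=frozenset({"iot"})),
    Rule("exempt-health-de", "do_not_inspect",
         url_categories=frozenset({"health"}), countries=frozenset({"DE"})),
]
```

Defaulting to "inspect" when no rule matches keeps exemptions explicit, which fits the goal of avoiding blind spots.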

Pillar 3: Secure decryption

When opening up encrypted traffic, it is vital that the security of the end-to-end connection is not degraded and that all sensitive cryptographic key material is safely handled according to industry best practices.

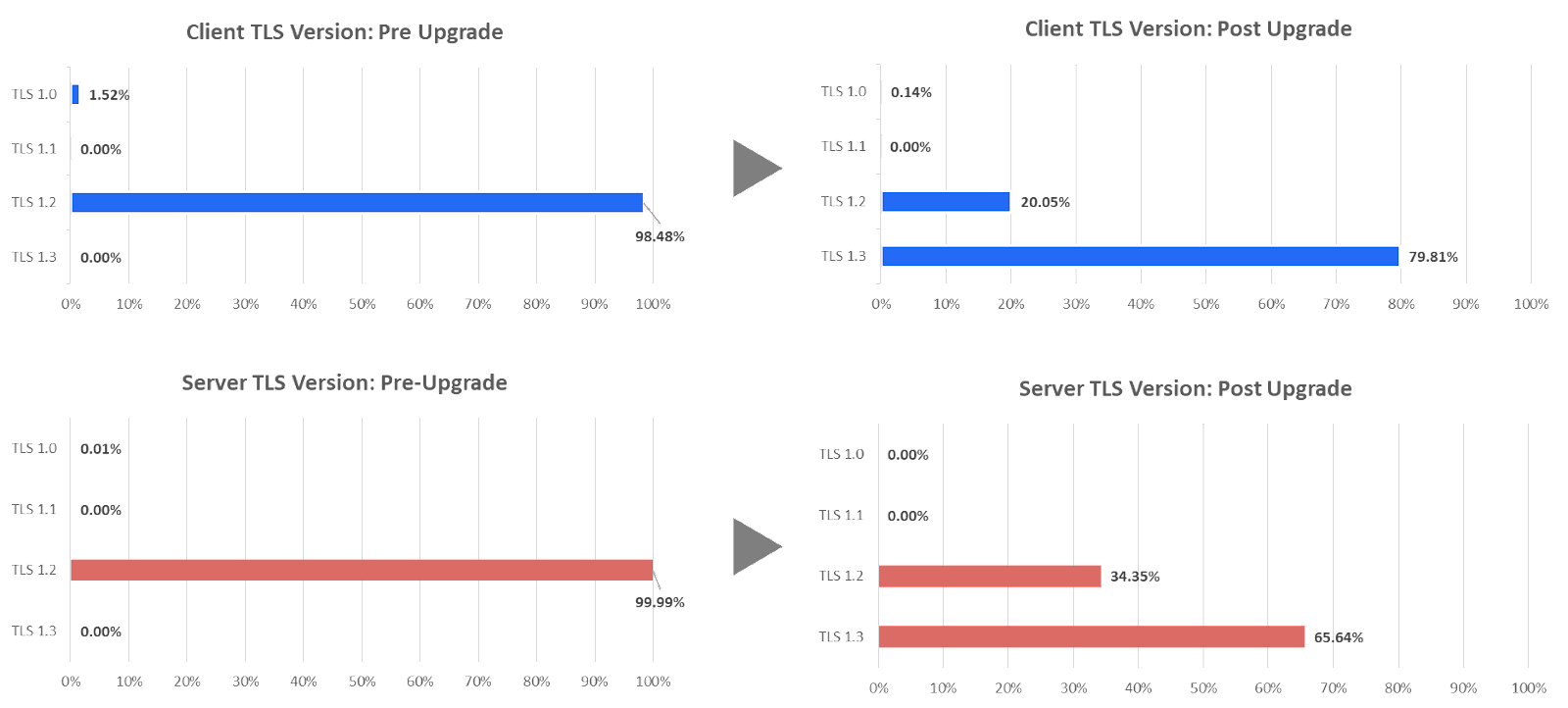

Pillar 3a: Optimized ciphersuite and TLS version selection

This tends to be overlooked due to the complex nature of ciphersuite variants. The SSL inspection solution must guarantee that the negotiated ciphersuite is at least as strong as the one that would have been negotiated without SSL inspection. For example, if a client proposes a ciphersuite that provides perfect forward secrecy (e.g. ECDHE_RSA_WITH_AES_256_CBC_SHA384), the SSL proxy needs to prefer it over weaker static RSA ciphers.

Zscaler’s design principle is to make this as simple and secure as possible - we always prefer the strongest ciphersuite that the client advertises and always propose a strength-prioritized list of ciphersuites to the server despite the added cryptography compute overhead.
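As a rough illustration of this principle, the Python sketch below ranks ciphersuites by name and picks the strongest one the client advertises. The ranking heuristic (forward secrecy first, then AEAD mode, then AES key size) and the function names are assumptions made for the example. A production proxy would rely on its TLS library's vetted preference order rather than string matching.

```python
from __future__ import annotations


def strength(suite: str) -> tuple[int, int, int]:
    """Rank a suite name: forward secrecy, then AEAD mode, then key size."""
    forward_secret = int("DHE_" in suite)  # matches both DHE_ and ECDHE_
    aead = int("GCM" in suite or "CHACHA20" in suite)
    # Match "AES_256" rather than "256" so SHA256 is not mistaken for a key size.
    key_bits = 256 if ("AES_256" in suite or "CHACHA20" in suite) else 128
    return (forward_secret, aead, key_bits)


def select_for_client(client_offer: list[str], proxy_supported: set[str]) -> str:
    """Pick the strongest suite that the client advertises and the proxy supports."""
    candidates = [s for s in client_offer if s in proxy_supported]
    if not candidates:
        raise ValueError("no ciphersuite in common")
    return max(candidates, key=strength)


def offer_to_server(proxy_supported: set[str]) -> list[str]:
    """Build the strength-prioritized list the proxy proposes to the server."""
    return sorted(proxy_supported, key=strength, reverse=True)
```

With this ranking, a client that offers both ECDHE_RSA_WITH_AES_256_CBC_SHA384 and a static RSA suite gets the ECDHE suite, regardless of the order in which it listed them.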

The following chart illustrates this principle nicely through the Zscaler TLS 1.3 acceleration hardware upgrade that was completed more than a year ago. Once we flipped the switch, we observed, overnight, a major shift in TLS version negotiation on both the client side and the server side. Such a significant upgrade was a non-event for customers. Imagine having to manually upgrade the hardware, OS, and drivers on your legacy NGFW appliances to get through this.

Pillar 3b: Key Material Safeguarding

Zscaler offers two intermediate CA enrollment models: bring-your-own CA and Zscaler’s default root/intermediate CA. In the former, Zscaler acts as a key custodian on behalf of the customer and assumes responsibility for protecting the key.

While the widespread adoption of PFS (perfect forward secrecy) ciphers has mitigated the risk of passive eavesdropping, an active MITM attack is still possible. An issuing CA private key is like a key to the kingdom. If a bad actor gets hold of that private key, they can issue arbitrary forged certificates for trusted domains. In combination with DNS poisoning, bad actors can then launch MITM attacks using certificates that appear trusted to the client.

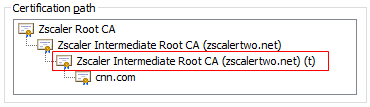

To mitigate this risk, Zscaler employs a robust array of key material safeguarding techniques, ranging from short-lived keys and revocation endpoints to stringent production access controls and auditing, along with other compensatory management, operational, and technical safeguards. The highlighted CA below (t stands for temp) showcases Zscaler’s short-lived issuing CA, which is automatically rotated on a weekly basis, significantly shrinking potential attack windows.

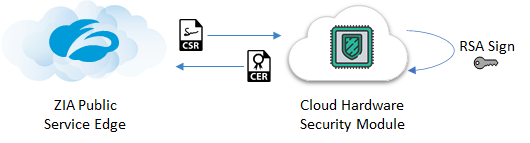
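To see why short-lived issuing CAs shrink the attack window, consider the following back-of-the-envelope Python sketch. It assumes, purely for illustration, that the issuing CA certificate's validity ends when the key is rotated. The function names and the seven-day constant are hypothetical and only mirror the weekly rotation mentioned above.

```python
from datetime import datetime, timedelta, timezone

# Weekly rotation, as described in the text; the exact validity period of the
# certificate is an assumption made for this example.
ROTATION_PERIOD = timedelta(days=7)


def is_due_for_rotation(issued_at: datetime, now: datetime,
                        period: timedelta = ROTATION_PERIOD) -> bool:
    """True once the issuing CA key has reached its rotation age."""
    return now - issued_at >= period


def max_exposure(issued_at: datetime, stolen_at: datetime,
                 period: timedelta = ROTATION_PERIOD) -> timedelta:
    """Upper bound on how long a stolen key could still sign trusted certificates."""
    expires_at = issued_at + period
    return max(expires_at - stolen_at, timedelta(0))


issued = datetime(2024, 1, 1, tzinfo=timezone.utc)
stolen = issued + timedelta(days=5)
print(max_exposure(issued, stolen))  # at most the remainder of the week
```

Under the same assumption, an issuing CA valid for a full year would leave a window of up to twelve months unless it were explicitly revoked.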

To further advance key protection, even for the most highly regulated and security-stringent organizations, Zscaler has recently launched in public preview a first-of-its-kind, fully turnkey Cloud HSM solution (FIPS 140-2 Level 3 validated) for safeguarding our customers’ issuing CA private keys. HSM-backed storage is the industry gold standard for key protection. In the fully integrated solution, the CA private key will reside inside the Cloud HSM for its entire lifetime and be used dynamically to sign domain certificates.

Stay tuned for more information.

Pillar 4: Design for Privacy

Opening up an SSL connection that was supposed to preserve user privacy inherently introduces risk to user privacy. The right architecture mitigates this risk.

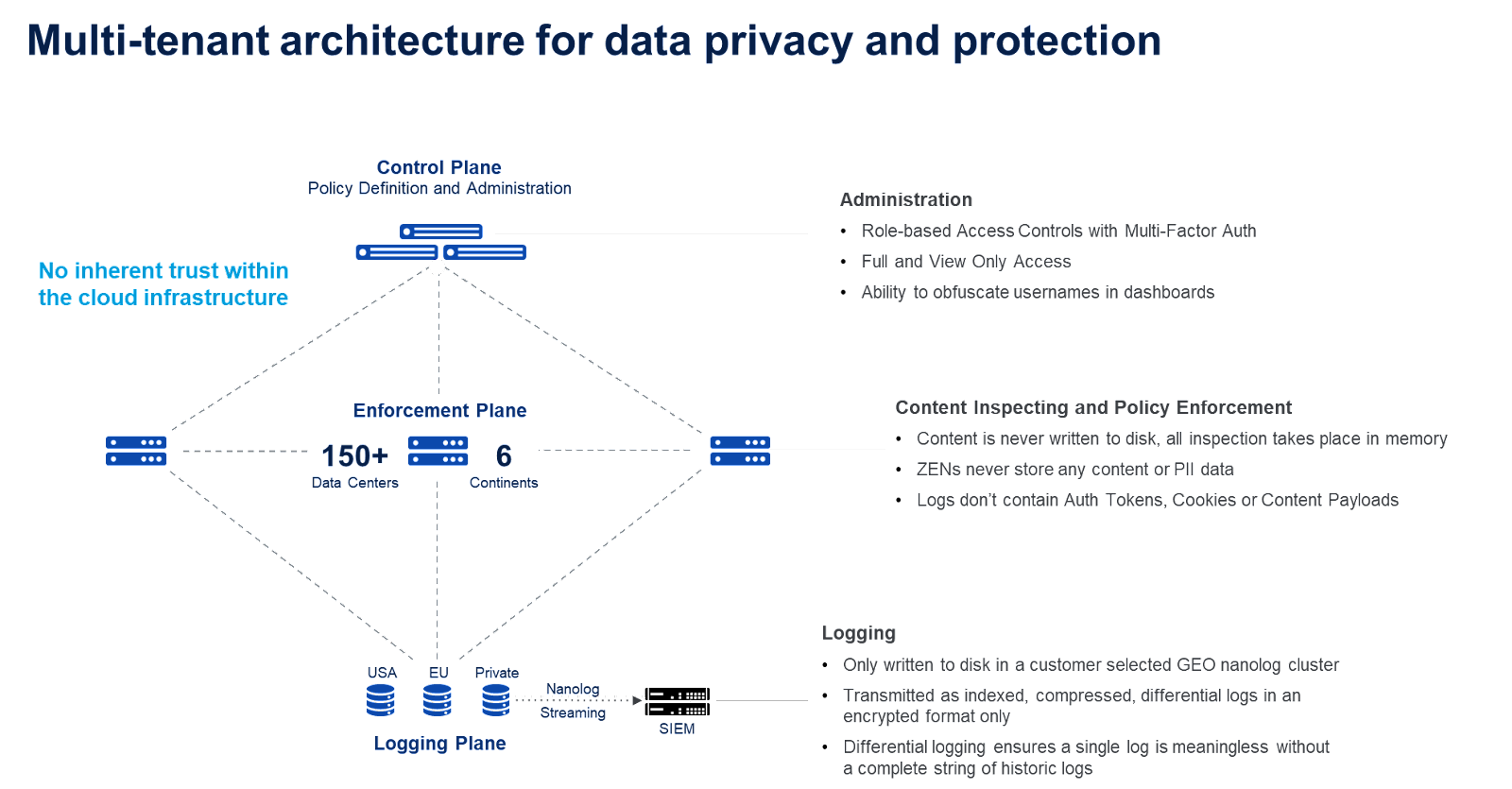

Security and privacy are at the core of Zscaler’s architecture. Our fundamental principle is to minimize the data sets that are collected and to secure them across their whole lifecycle: in use, in motion, and at rest. Details on the controls are illustrated in this image:

Don’t just take our word for it: all our information security controls are validated by independent third-party assessors against the leading compliance and data privacy frameworks, including DoD IL5 (the US Department of Defense’s highest standard for cloud vendors). Zscaler also undergoes an independent Sensitive Data Handling Assessment that verifies documented encryption controls and client key management, and that validates that any stored key information is unexploitable by examining activity logs, core dump files, and database schemas. To learn more, visit here.

Pillar 5: Visibility

Comprehensive operational visibility is vital for both establishing trust and addressing several key questions:

- What is your SSL inspection coverage?

- Are there any user experience problems due to incompatible apps?

- Are you observing any obsolete TLS versions?

- Are you using the most secure ciphers?

- What value are you getting from SSL inspection (e.g. threats blocked, DLP incidents)?

The foundation for answering these questions is the raw log data. A robust logging plane that captures high-fidelity, context-rich, transaction-level logs at scale is imperative (as opposed to the aggregate-level logs that other vendors may resort to due to architectural deficiencies). Zscaler’s Nanologs capture 18+ unique TLS log fields for each TLS connection (decrypted, undecrypted, and failed), as seen here:

Once you have the basics in place, answering the operational and value questions is straightforward.
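As a simple illustration of how transaction-level logs answer these questions, the Python sketch below computes inspection coverage, counts obsolete TLS versions, and ranks clients by failed handshakes. The record layout (`client`, `tls_version`, `status`) is hypothetical and is not the Nanolog schema. The three status values mirror the decrypted, undecrypted, and failed connection types mentioned above.

```python
from collections import Counter

# SSLv3, TLS 1.0 and TLS 1.1 are formally deprecated by the IETF.
OBSOLETE_VERSIONS = {"SSLv3", "TLS1.0", "TLS1.1"}


def summarize(logs):
    """Summarize per-connection TLS log records (hypothetical field names)."""
    total = len(logs)
    decrypted = sum(1 for r in logs if r["status"] == "decrypted")
    obsolete = Counter(
        r["tls_version"] for r in logs if r["tls_version"] in OBSOLETE_VERSIONS
    )
    failed_by_client = Counter(r["client"] for r in logs if r["status"] == "failed")
    return {
        # Share of all connections that were actually decrypted and inspected.
        "coverage_pct": round(100 * decrypted / total, 1) if total else 0.0,
        "obsolete_versions": dict(obsolete),
        # Clients with the most failed handshakes first.
        "failed_by_client": failed_by_client.most_common(),
    }


SAMPLE_LOGS = [
    {"client": "10.0.0.5", "tls_version": "TLS1.3", "status": "decrypted"},
    {"client": "10.0.0.5", "tls_version": "TLS1.2", "status": "decrypted"},
    {"client": "10.0.0.9", "tls_version": "TLS1.0", "status": "undecrypted"},
    {"client": "10.0.0.7", "tls_version": "TLS1.2", "status": "failed"},
]
print(summarize(SAMPLE_LOGS))
```

None of these per-client and per-version breakdowns would be possible with aggregate-only logs, which is why transaction-level logging matters.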

Example 1: Failed client SSL handshake logs proactively surface misbehaving clients:

Example 2: Zscaler’s QBR (quarterly business review) reports recommend which categories should be inspected:

Conclusion

SSL inspection is a crucial capability for protecting users and corporate data, but because the original protocol was not designed to be inspected by a trusted third party, it also introduces risk. Zscaler acknowledges and mitigates these risks through its purpose-built, cloud-native SSE platform, following five fundamental principles. Over 90% of internet traffic is encrypted today, and malicious actors, including insider threats, have leveraged the privacy provided by SSL to disseminate malware and exfiltrate data. Inspecting this traffic is therefore critical for preventing compromise and data loss, and for improving survivability in the current threat landscape. While there are risks associated with performing SSL inspection, Zscaler has implemented the controls outlined in this article, which have brought that risk to an acceptable level for over 6,000 global customers. Risk-taking is fundamental to business. Without it, no business value would be created.